RESEARCH INTERESTS

Groups are algebraic structures that arise naturally wherever there is symmetry of some sort. Historically, groups have been an important tool for understanding many areas of mathematics, in particular geometry. Geometric group theory reverses this relationship, using the tools of geometry and topology to study groups. It is often seen as quite a young area of maths, coming to the fore in the late 1970s and 1980s with the work of people like Gromov, but it has its roots in the work of Max Dehn at the start of the 20th century.

My research centres around a few topics, including Coxeter groups, Nielsen equivalence, accessibility of groups, and applying geometry to machine learning problems.

Coxeter groups

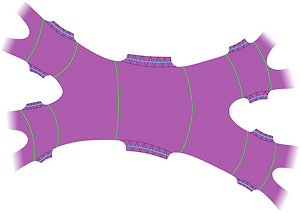

I first came across Coxeter groups in my master's dissertation, which was supervised by Dr. Pavel Tumarkin at Durham University. These are groups generated by reflections, so they have particularly strong connections with geometry; indeed, they turn up all over mathematics. I have worked in particular on the connection between the geometry of Coxeter groups and the smoothness of certain varieties which live in flag manifolds, in a project supervised by Prof. Konni Rietsch at King's College London.

Nielsen equivalence

Generating sets of size n for a group G (taken as ordered n-tuples) are in one-to-one correspondence with the surjective homomorphisms from the free group on n generators to G. Two such generating sets are called Nielsen equivalent if the corresponding homomorphisms differ by an automorphism of the free group. It has been shown that all generating sets of the same size for a surface group are Nielsen equivalent. I am studying Nielsen equivalence for Coxeter groups, where generating sets of the same size are known not to be Nielsen equivalent in general, and for other related classes of groups.
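For a small finite group, Nielsen equivalence can be explored by brute force. The sketch below is only an illustration, not a method used in my research: it assumes permutations stored as tuples, relies on the classical fact that the automorphism group of a free group is generated by the elementary Nielsen moves (invert a generator, swap two generators, replace a generator by its product with another), and uses the symmetric group on three points as a toy example. All function names are made up for this example.

```python
from collections import deque
from itertools import permutations, product


def compose(p, q):
    """Return the permutation 'q then p' (a tuple p sends index i to p[i])."""
    return tuple(p[i] for i in q)


def inverse(p):
    """Return the inverse permutation of p."""
    inv = [0] * len(p)
    for i, image in enumerate(p):
        inv[image] = i
    return tuple(inv)


def generated_subgroup(gens):
    """Return the set of all elements generated by gens (finite group closure)."""
    identity = tuple(range(len(gens[0])))
    elements = {identity}
    frontier = [identity]
    while frontier:
        g = frontier.pop()
        for s in gens:
            h = compose(s, g)
            if h not in elements:
                elements.add(h)
                frontier.append(h)
    return elements


def nielsen_neighbours(gens):
    """Yield every tuple obtained from gens by one elementary Nielsen move."""
    n = len(gens)
    for i in range(n):
        # Move 1: invert the i-th generator.
        yield gens[:i] + (inverse(gens[i]),) + gens[i + 1:]
        for j in range(n):
            if i == j:
                continue
            # Move 2: replace g_i by the product g_i * g_j.
            yield gens[:i] + (compose(gens[i], gens[j]),) + gens[i + 1:]
            # Move 3: swap g_i and g_j.
            swapped = list(gens)
            swapped[i], swapped[j] = swapped[j], swapped[i]
            yield tuple(swapped)


def nielsen_class(gens):
    """Breadth-first search for all tuples Nielsen equivalent to gens."""
    seen = {gens}
    queue = deque([gens])
    while queue:
        current = queue.popleft()
        for candidate in nielsen_neighbours(current):
            if candidate not in seen:
                seen.add(candidate)
                queue.append(candidate)
    return seen


# Toy example: ordered generating pairs of the symmetric group on 3 points.
S3 = list(permutations(range(3)))
generating_pairs = [
    pair for pair in product(S3, repeat=2)
    if len(generated_subgroup(pair)) == len(S3)
]
one_class = nielsen_class(generating_pairs[0])
print(len(generating_pairs), len(one_class))
```

Comparing the two printed numbers shows whether every generating pair lies in a single Nielsen class. The search only works for finite groups small enough to enumerate, so it says nothing directly about infinite groups such as surface groups or infinite Coxeter groups.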

Geometry and machine learning

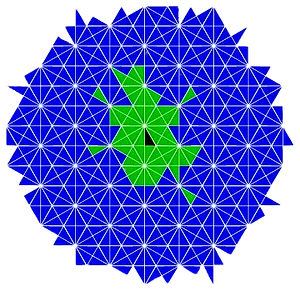

Applications of machine learning have become ubiquitous. Roughly speaking, you have some very complicated function (for example, one that takes in photographs of pets and categorises them as cats, dogs, or gerbils), and you want to teach a computer to approximate this function as closely as possible. Suppose the function is invariant under the action of a group on the input data (for example, an upside-down picture of a dog is still a picture of a dog, so there is invariance under the group of rotations). One can use the geometry of these group actions to design more accurate and efficient machine learning systems.
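One simple way to build invariance into a function is to average it over the group. The sketch below is a toy illustration under stated assumptions, not a description of any particular system: it restricts to the finite group of rotations by multiples of 90 degrees acting on a square grid of pixel values, and the feature `top_row_brightness` is a made-up example of a function that is not rotation invariant.

```python
def rotate90(image):
    """Rotate a square grid (a tuple of row tuples) by 90 degrees clockwise."""
    return tuple(zip(*image[::-1]))


def orbit(image):
    """Return the four rotations of the image, i.e. its orbit under the group."""
    images = [image]
    for _ in range(3):
        images.append(rotate90(images[-1]))
    return images


def symmetrise(f):
    """Return the average of f over the four rotations of its input."""
    def averaged(image):
        values = [f(rotated) for rotated in orbit(image)]
        return sum(values) / len(values)
    return averaged


def top_row_brightness(image):
    """Hypothetical feature: total brightness of the top row (not invariant)."""
    return sum(image[0])


image = ((1, 2),
         (3, 4))
invariant_feature = symmetrise(top_row_brightness)

# The raw feature changes when the picture is rotated ...
print(top_row_brightness(image), top_row_brightness(rotate90(image)))
# ... but the averaged feature does not.
print(invariant_feature(image), invariant_feature(rotate90(image)))
```

Averaging costs one evaluation per group element, which is only feasible for small finite groups; this is one reason to look for designs that use the geometry of the action more cleverly.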

Accessibility of groups

Bass-Serre theory tells us how to write a group as an amalgamated product or HNN extension by studying how the group acts on simplicial trees. A natural question to ask is whether there is an upper bound on the number of times you can split a group in either of these ways. If there is, the group is said to be accessible. Accessibility is the first step towards building JSJ-decompositions of groups. I started thinking about this topic in a project supervised by Dr. Lars Louder at UCL.